Author: Vitalik; Translator: Deng Tong, Golden Finance

Special thanks to Justin Drake, Hsiao-wei Wang, @antonttc and Francesco for their feedback and review.

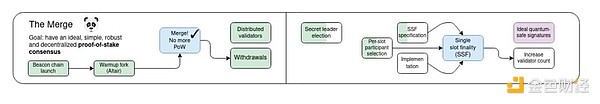

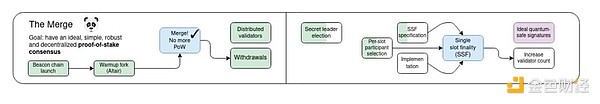

Originally, "the Merge" referred to the most important event in the history of the Ethereum protocol since its launch: the long-awaited and hard-won transition from proof-of-work to proof-of-stake. Ethereum has now been running stably as a proof-of-stake system for nearly two years, and proof-of-stake has performed remarkably well in terms of stability, performance, and avoiding centralization risks. However, there are still some important areas where proof-of-stake needs to improve.

My 2023 roadmap split this into two parts: improvements to technical features such as stability, performance, and accessibility to smaller validators, and economic changes to address centralization risks. The former took over the "Merge" heading, while the latter became part of "the Scourge".

This post focuses on the "Merge" part: what else can be improved in the technical design of proof-of-stake, and what paths could get us there?

This is not an exhaustive list of improvements that can be made to proof-of-stake; rather, it is a list of ideas that are being actively considered.

Single-slot finality and democratization of staking

What problem are we solving?

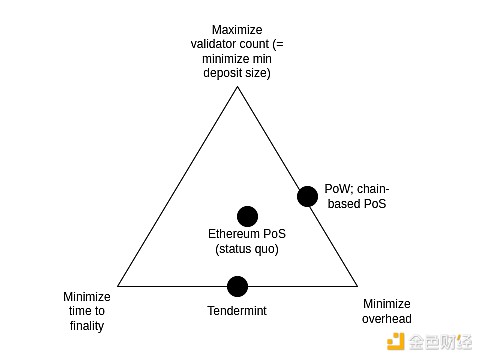

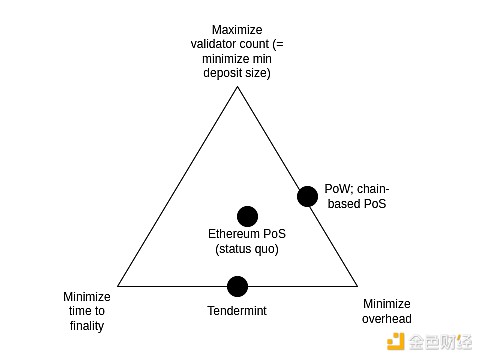

Today, it takes 2-3 epochs (~15 minutes) to finalize a block, and it takes 32 ETH to become a staker. This was originally a compromise to strike a balance between three goals:

Maximize the number of validators who can participate in staking (which directly means minimizing the minimum ETH required to stake)

Minimize finalization time

Minimize the overhead of running a node

These three goals conflict with each other: In order to achieve economic finality (i.e. an attacker would need to destroy a large amount of ETH to revert a finalized block), every validator needs to sign two messages for each finalization. So if you have many validators, it either takes a long time to process all the signatures, or you need very powerful nodes to process all the signatures at the same time.
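To make the three-way conflict concrete, here is a back-of-the-envelope sketch in Python. It assumes, hypothetically, that every validator stakes exactly the minimum deposit, signs 2 messages per finalization (as stated above), and that all signatures must be processed within the finality window. The total-stake figure is an illustrative round number chosen so that 32 ETH deposits give 1 million validators, not a measurement.

```python
# Back-of-the-envelope signature load (illustrative sketch, not a protocol model).
# Hypothetical assumptions: all stake is split into minimum-size validators,
# each validator signs 2 messages per finalization, and every node must
# process all signatures within the finality window.

def validator_count(total_stake_eth: int, min_deposit_eth: int) -> int:
    """Worst case: every validator holds exactly the minimum deposit."""
    return total_stake_eth // min_deposit_eth


def signatures_per_second(total_stake_eth: int, min_deposit_eth: int,
                          finality_seconds: float,
                          messages_per_finalization: int = 2) -> float:
    """Signatures a node must verify per second to keep up with finality."""
    validators = validator_count(total_stake_eth, min_deposit_eth)
    return validators * messages_per_finalization / finality_seconds


if __name__ == "__main__":
    TOTAL_STAKE = 32_000_000  # hypothetical round figure (ETH)
    for min_deposit, finality in [(32, 900), (32, 12), (1, 12)]:
        rate = signatures_per_second(TOTAL_STAKE, min_deposit, finality)
        print(f"min deposit {min_deposit:>2} ETH, finality {finality:>3} s: "
              f"{rate:,.0f} signatures/s")
```

Under these assumptions, moving from ~15-minute finality (900 s) to 12-second finality multiplies the per-second load by 75, and dropping the minimum deposit from 32 ETH to 1 ETH multiplies it by another 32.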

Note that all of this depends on a key goal of Ethereum: ensuring that even a successful attack is costly to the attacker. This is what the term "economic finality" means. Without this goal, we could solve the problem by randomly selecting a committee to finalize each slot (as Algorand does). The problem with this approach is that if an attacker does control 51% of the validators, they can attack (revert finalized blocks, censor, or delay finalization) at very low cost: only the portion of the attacker's nodes that sit in the committee can be detected as participating in the attack and punished, whether through slashing or a minority soft fork. This means an attacker could attack the chain over and over again. So if we want economic finality, a naive committee-based approach won't work, and at first glance it seems we really do need the full validator set to participate.

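Ideally, we'd like to preserve economic finality while improving on the status quo in two ways:

Finalize blocks in a single slot (ideally keeping or even reducing the current 12-second slot length) instead of after ~15 minutes

Allow validators to stake with 1 ETH (down from 32 ETH)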

The first goal is motivated by two considerations, both of which can be seen as "bringing Ethereum's properties in line with those of (more centralized) performance-focused L1 chains".

First, it ensures that all Ethereum users benefit from the higher level of security provided by the finality mechanism. Today, most users don't get that guarantee because they aren't willing to wait 15 minutes; with single-slot finality, users would see their transactions finalized almost as soon as they are confirmed. Second, it simplifies the protocol and the surrounding infrastructure, because users and applications no longer have to worry about the possibility of a chain reversion (barring the relatively rare case of an inactivity leak).

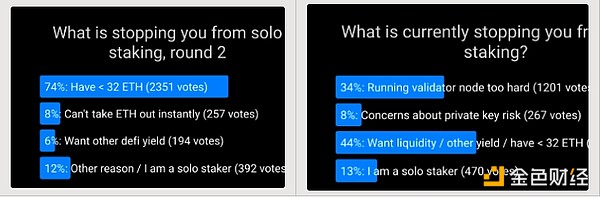

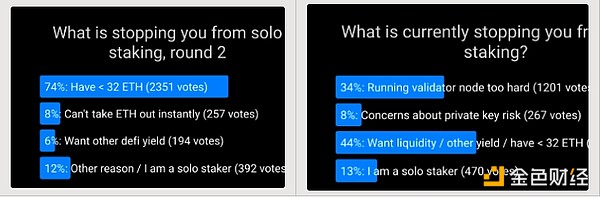

The second goal is motivated by a desire to support solo stakers. Poll after poll has repeatedly shown that the primary factor preventing more people from staking solo is the 32 ETH minimum. Lowering the minimum to 1 ETH would address this issue to the point where other issues become the primary factor limiting solo staking.

There is a catch: the goals of faster finality and more democratized staking both conflict with the goal of minimizing overhead. In fact, this conflict is the entire reason we didn't adopt single-slot finality in the first place. However, recent research suggests several possible ways around the problem.

What is it and how does it work?

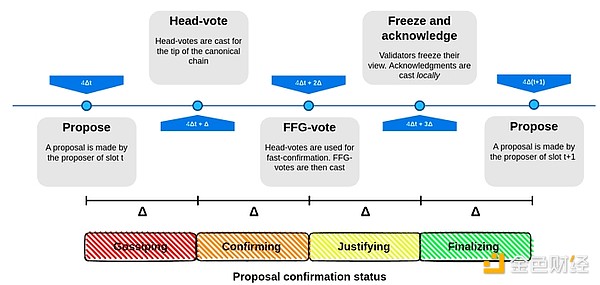

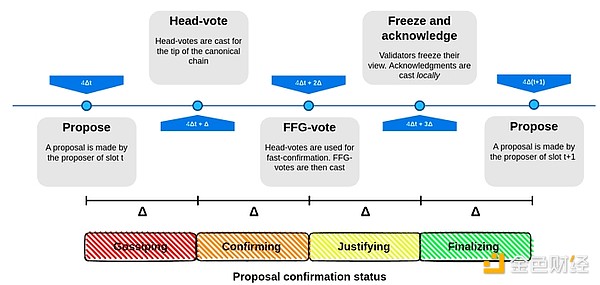

Single-slot finality involves using a consensus algorithm that finalizes blocks within one slot. This is not an unattainable goal in itself: many algorithms, such as Tendermint consensus, already achieve it with optimal properties. One desirable property unique to Ethereum that Tendermint does not support is the inactivity leak, which allows the chain to keep running and eventually recover even if more than 1/3 of validators are offline. Fortunately, this has been addressed: there are already proposals to modify Tendermint-style consensus to accommodate inactivity leaks.
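The recovery property of the inactivity leak can be illustrated with a toy model in Python. It assumes, hypothetically, that offline validators lose a constant fraction `leak_rate` of their balance each epoch while online balances stay fixed, and it counts epochs until the online validators again hold the 2/3 needed to finalize. Ethereum's real penalty schedule differs; only the qualitative behavior carries over.

```python
def epochs_until_quorum(online_stake: float, offline_stake: float,
                        leak_rate: float) -> int:
    """Epochs until online validators hold at least 2/3 of remaining stake.

    Hypothetical model: each epoch, offline balances shrink by a constant
    fraction `leak_rate`; online balances are unchanged.
    """
    if online_stake <= 0:
        raise ValueError("some stake must be online for the leak to help")
    if not 0 < leak_rate < 1:
        raise ValueError("leak_rate must be in (0, 1)")
    epochs = 0
    # online / (online + offline) >= 2/3  is equivalent to  online >= 2 * offline
    while online_stake < 2 * offline_stake:
        offline_stake *= 1 - leak_rate
        epochs += 1
    return epochs


if __name__ == "__main__":
    # Half the stake offline, hypothetical 1% leak per epoch.
    print(epochs_until_quorum(0.5, 0.5, 0.01))
```

In a Tendermint-style protocol without this mechanism, the same situation (more than 1/3 offline) simply halts finalization until the offline validators return.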

Leading Single-Slot Finality Proposals

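The hardest part of the problem is figuring out how to make single-slot finality work with a very high validator count without imposing extremely high overhead on node operators. To this end, there are several leading solutions:

Option 1: Brute force - work hard on implementing better signature aggregation protocols, potentially using ZK-SNARKs or STARK-based aggregation, which would allow us to process signatures from millions of validators in every slot.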

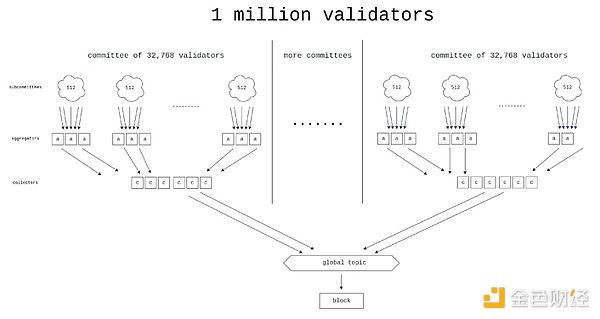

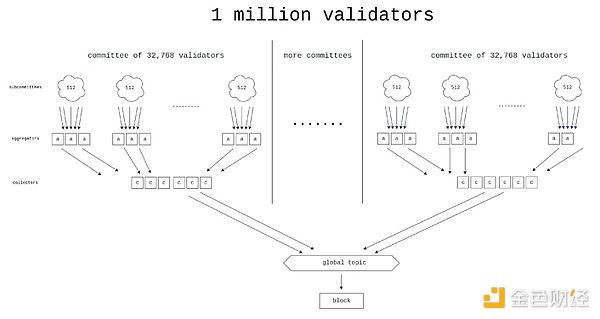

Horn, one of the proposed designs for a better aggregation protocol.

Option 2: Orbit Committees - A new mechanism that allows randomly selected medium-sized committees to be responsible for finalizing the chain, but in a way that preserves the attack cost properties we are looking for.

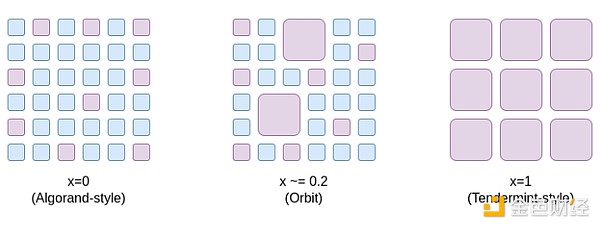

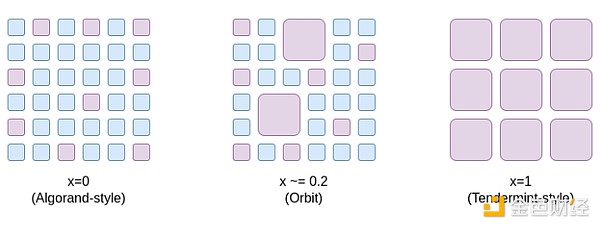

One way to think about Orbit SSF is that it opens up a space of compromise options ranging from x=0 (Algorand-style committees with no economic finality) to x=1 (the Ethereum status quo), including points in the middle where Ethereum still has enough economic finality to be extremely secure, while we gain the efficiency advantage of requiring only a medium-sized random sample of validators to participate in each epoch.

Orbit exploits the pre-existing heterogeneity of validator deposit sizes to get as much economic finality as possible while still giving small validators a commensurate role. In addition, Orbit uses slow committee rotation to ensure high overlap between adjacent quorums, so that its economic finality still holds across committee rotation boundaries.
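The following Python sketch illustrates, under a hypothetical selection rule, why deposit-size heterogeneity helps. A validator with balance b is sampled with probability min(1, b / threshold), so large validators always sit on the committee while small ones are sampled. The expected fraction of stake covered by the committee then plays roughly the role of the x parameter above: since two conflicting finalizations require at least 1/3 of the participating stake to double-vote, the slashable floor scales with it. The selection rule and the `threshold` parameter are assumptions for illustration, not the Orbit specification.

```python
from fractions import Fraction


def committee_stats(balances, threshold):
    """Expected committee size and expected fraction of total stake covered.

    Hypothetical rule: a validator with balance b joins the committee with
    probability min(1, b / threshold).
    """
    total = sum(balances)
    expected_size = Fraction(0)
    covered = Fraction(0)
    for b in balances:
        p = min(Fraction(1), Fraction(b, threshold))
        expected_size += p
        covered += p * b
    return expected_size, covered / total


def slashable_floor(total_stake, covered_fraction):
    """Minimum slashable stake if two conflicting blocks are finalized:
    at least 1/3 of the participating (covered) stake must double-vote."""
    return Fraction(total_stake) * covered_fraction / 3


if __name__ == "__main__":
    balances = [2048, 2048, 32, 32, 32, 32]  # hypothetical deposits in ETH
    size, frac = committee_stats(balances, threshold=1024)
    print(float(size), float(frac), float(slashable_floor(sum(balances), frac)))
```

In this toy example the committee has only about 2 members in expectation, yet covers about 97% of the stake, because the two large validators are always included.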

Option 3: Two-tier staking - a mechanism where stakers are divided into two classes, one with a higher deposit requirement, and one with a lower deposit requirement. Only the tier with the higher deposit requirement would directly participate in providing economic finality. There are various proposals as to what exactly the rights and responsibilities of the tier with the lower deposit requirement would be (see, for example, the Rainbow staking post). Common ideas include:

The right to delegate stake to higher-tier stakers

Random sampling of lower-tier stakers to attest to, and be needed to finalize, each block

The right to generate inclusion lists

What is the connection to existing research?

Path to Single Slot Finality (2022): https://notes.ethereum.org/@vbuterin/single_slot_finality

Specific proposal for Ethereum Single Slot Finality protocol (2023): https://eprint.iacr.org/2023/280

Orbit SSF: https://ethresear.ch/t/orbit-ssf-solo-staking-friendly-validator-set-management-for-ssf/19928

Further analysis of Orbit style mechanisms: https://notes.ethereum.org/@anderselowsson/Vorbit_SSF

Horn, the signature aggregation protocol (2022): https://ethresear.ch/t/horn-collecting-signatures-for-faster-finality/14219

Signature merging for large-scale consensus (2023): https://ethresear.ch/t/signature-merging-for-large-scale-consensus/17386?u=asn

Signature aggregation protocol proposed by Khovratovich et al.: https://hackmd.io/@7dpNYqjKQGeYC7wMlPxHtQ/BykM3ggu0#/

Signature aggregation based on STARK (2022): https://hackmd.io/@vbuterin/stark_aggregation

Rainbow Staking: https://ethresear.ch/t/unbundling-staking-towards-rainbow-staking/18683

What’s left to do? What are the tradeoffs?

There are four main possible paths (and we can also take hybrid paths):

(1) Do nothing and keep staking as is. This would leave Ethereum's security experience and staking centralization properties worse than they could otherwise be.

(2) Avoid "high tech" and solve the problem by cleverly rethinking protocol assumptions: we relax the "economic finality" requirement so that attacks must still be expensive, but accept that the cost of an attack might be 10 times lower than today (e.g., $2.5 billion instead of $25 billion). It is widely believed that Ethereum's economic finality today is far beyond what it needs to be, and that its main security risks lie elsewhere, so this is arguably an acceptable sacrifice.

The main work here is to verify that the Orbit mechanism is secure and has the properties we want, and then to fully formalize and implement it. In addition, EIP-7251 (increase the maximum effective balance) allows validators to voluntarily consolidate their balances, which immediately reduces chain verification overhead and serves as an effective initial stage for rolling out Orbit.

(3) Avoid clever rethinking and instead use high tech to brute-force the problem. This requires aggregating a very large number of signatures (more than 1 million) in a very short time (5-10 seconds).

(4) Avoid both clever rethinking and high tech, and instead create a two-tier staking system. This still carries centralization risks, which depend heavily on the specific rights granted to the lower tier of stakers. For example:

If lower-tier stakers are required to delegate their attestation power to higher-tier stakers, delegation may become centralized, and we end up with two highly centralized tiers of stake.

If a random sample of lower-tier stakers is required to approve each block, an attacker can spend a very small amount of ETH to block finality.

If lower-tier stakers can only make inclusion lists, the attestation layer may still be centralized, at which point a 51% attack on the attestation layer can censor the inclusion lists themselves.

Multiple strategies can be combined, for example:

(1 + 3): Use brute-force techniques to reduce the minimum deposit size without single-slot finality. The amount of aggregation required is 64 times smaller than in the pure (3) case, so the problem becomes easier.

(2 + 3): Do Orbit SSF with conservative parameters (e.g. 128k validator committee instead of 8k or 32k), and use brute force techniques to make it super efficient.

(1 + 4): Add rainbow staking without single-slot finality.

How does it interact with the rest of the roadmap?

Single-slot finality reduces the risk of certain types of multi-block MEV attacks, among other benefits. In addition, attester-proposer separation designs and other in-protocol block production pipelines would need to be designed differently in a single-slot finality world.

The weakness of brute force strategies is that they make it harder to shorten slot times.

Single Secret Leader Election

What problem are we solving?

Today, which validator will propose the next block is known in advance. This creates a security vulnerability: an attacker can monitor the network, identify which validators correspond to which IP addresses, and launch a DoS attack on the validator when it is about to propose a block.

What is it? How does it work?

The best way to solve the DoS problem is to hide which validator will produce the next block, at least until the block is actually produced. Note that this is easy if we drop the "single" requirement: one solution is to let anyone create the next block, but require their randao reveal to be less than 2^256 / N, where N is the number of validators. On average, only one validator will satisfy this requirement, but sometimes there will be two or more, and sometimes there will be none. Combining the "secrecy" requirement with the "single" requirement has always been the hard part.
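The threshold-based (non-"single") approach is easy to simulate. In this Python sketch, SHA-256 hashes stand in for randao reveals (a simplifying assumption; real reveals are BLS signatures), and we count how many validators fall below 2^256 / N in each slot. The counts follow roughly a Poisson(1) distribution, so slots with zero eligible proposers are about as common as slots with exactly one.

```python
import hashlib

TWO_256 = 2 ** 256


def eligible_proposers(randao_reveals, n_validators):
    """Indices whose reveal, read as a 256-bit integer, is below 2**256 / N."""
    threshold = TWO_256 // n_validators
    return [i for i, r in enumerate(randao_reveals)
            if int.from_bytes(r, "big") < threshold]


def simulate(n_validators, n_slots):
    """Histogram {number of eligible proposers: number of slots}."""
    counts = {}
    for slot in range(n_slots):
        # Stand-in for per-validator randao reveals (hypothetical derivation).
        reveals = [hashlib.sha256(f"{slot}:{v}".encode()).digest()
                   for v in range(n_validators)]
        k = len(eligible_proposers(reveals, n_validators))
        counts[k] = counts.get(k, 0) + 1
    return dict(sorted(counts.items()))


if __name__ == "__main__":
    print(simulate(n_validators=500, n_slots=1000))
```

This is exactly the failure mode SSLE is designed to avoid: about a third of slots would have no proposer at all, and some would have competing ones.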

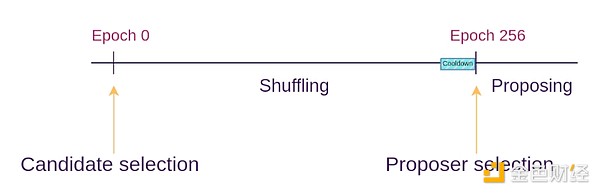

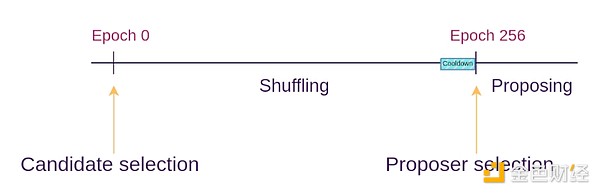

The single secret leader election (SSLE) protocol solves this by using cryptographic techniques to create a "blinded" validator ID for each validator, and then giving many proposers the chance to shuffle and re-blind the pool of blinded IDs (similar to how a mixnet works). In each slot, a random blinded ID is chosen. Only the owner of that blinded ID can generate a valid proof to propose a block, but no one else knows which validator the blinded ID corresponds to.

Whisk SSLE Protocol
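A toy version of the shuffle-and-re-blind idea, loosely modeled on the (r·G, r·k·G) trackers in the Whisk proposal, can be written with modular exponentiation in Python. Each validator holds a secret k; anyone can re-blind a tracker (A, B) by raising both components to a fresh random exponent, which changes its appearance but preserves the relation B = A^k that only the owner can check. The group, parameters, and function names are illustrative assumptions, the parameters are not secure, and the zero-knowledge proofs that real designs use (for correct shuffling and for proving ownership without revealing k) are omitted.

```python
import random

# Toy group: integers modulo the Mersenne prime 2**127 - 1. NOT secure.
P = 2 ** 127 - 1
G = 3


def make_tracker(k, rng):
    """Blinded ID for a validator with secret k: (G^r, G^(r*k))."""
    r = rng.randrange(2, P - 1)
    a = pow(G, r, P)
    return (a, pow(a, k, P))


def reblind(tracker, rng):
    """Raise both parts to a fresh exponent s: (A^s, B^s) still satisfies B' = A'^k."""
    s = rng.randrange(2, P - 1)
    a, b = tracker
    return (pow(a, s, P), pow(b, s, P))


def shuffle_and_reblind(pool, rng):
    """One shuffler's turn: re-blind every tracker, then permute the pool."""
    new_pool = [reblind(t, rng) for t in pool]
    rng.shuffle(new_pool)
    return new_pool


def owns(tracker, k):
    """Only the holder of k can recognize its tracker (B == A^k)."""
    a, b = tracker
    return pow(a, k, P) == b


def demo(n_validators=8, n_shuffles=3, seed=7):
    rng = random.Random(seed)
    secret_keys = [rng.randrange(2, P - 1) for _ in range(n_validators)]
    pool = [make_tracker(k, rng) for k in secret_keys]
    for _ in range(n_shuffles):  # several proposers take turns shuffling
        pool = shuffle_and_reblind(pool, rng)
    chosen = rng.choice(pool)  # this slot's leader, unknown to observers
    return secret_keys, pool, chosen


if __name__ == "__main__":
    keys, pool, chosen = demo()
    print([i for i, k in enumerate(keys) if owns(chosen, k)])
```

After several shuffles, an observer sees only unrelated-looking pairs, while exactly one key holder can recognize the chosen tracker as its own.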

What are the links to existing research?

Dan Boneh’s paper (2020): https://eprint.iacr.org/2020/025.pdf

Whisk (Ethereum’s specific proposal, 2022): https://ethresear.ch/t/whisk-a-practical-shuffle-based-ssle-protocol-for-ethereum/11763

Single Secret Leader Election tag on ethresear.ch: https://ethresear.ch/tag/single-secret-leader-election

Simplified SSLE using ring signatures: https://ethresear.ch/t/simplified-ssle/12315

What’s left to do? What are the tradeoffs?

Really, all that's left is to find and implement a protocol simple enough that we are comfortable deploying it on mainnet. We place a lot of importance on Ethereum remaining a fairly simple protocol, and we don't want its complexity to grow further. The SSLE implementations we've seen add hundreds of lines of spec code and introduce new assumptions in complicated cryptography. Finding a sufficiently efficient quantum-resistant SSLE implementation is also an open problem.

It may turn out that the "marginal additional complexity" of SSLE only drops low enough once we introduce general-purpose zero-knowledge proofs into the Ethereum L1 protocol for other reasons (e.g., state tries, ZK-EVM).

Another option is to not bother with SSLE at all, and instead use out-of-protocol mitigations (e.g. at the p2p layer) to address the DoS problem.

How does it interact with the rest of the roadmap?

If we add an attester-proposer separation (APS) mechanism, such as execution tickets, then execution blocks (i.e., blocks containing Ethereum transactions) will not need SSLE, because we can rely on specialized block builders. However, consensus blocks (i.e., blocks containing protocol messages such as attestations, possibly pieces of inclusion lists, etc.) would still benefit from SSLE.

Faster transaction confirmations

What problem are we solving?

It would be valuable to further reduce Ethereum's transaction confirmation time, from 12 seconds to, say, 4 seconds. Doing so would significantly improve the user experience of both the L1 and based rollups, while making defi protocols more efficient. It would also make it easier for L2s to decentralize, because it would allow a large class of L2 applications to run on based rollups, reducing the need for L2s to build their own committee-based decentralized sequencing.

What is it and how does it work?

There are roughly two techniques here:

Reduce the slot time, for example to 8 seconds or 4 seconds. This does not necessarily mean 4 second finality: finality inherently requires three rounds of communication, so we can make each round of communication a separate block that will at least get tentatively confirmed after 4 seconds.

Allow proposers to issue preconfirmations throughout the slot. In the extreme case, a proposer could include transactions in its block in real time as it sees them, and immediately publish a preconfirmation message for each one ("My first transaction is 0x1234...", "My second transaction is 0x5678..."). The case where a proposer publishes two conflicting confirmations can be handled in two ways: (i) by slashing the proposer, or (ii) by having attesters vote on which one came first.
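Option (i) requires detecting conflicting preconfirmations. A minimal Python sketch, assuming a hypothetical message format of (slot, index, tx_hash) and omitting signatures: any two messages that assign different transactions to the same position in the same slot are evidence of equivocation that a slashing rule could act on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preconf:
    """Hypothetical message: 'my index-th transaction in this slot is tx_hash'.
    A real scheme would have the proposer sign (slot, index, tx_hash)."""
    slot: int
    index: int
    tx_hash: str


def find_equivocations(preconfs):
    """Return sorted (slot, index) positions with conflicting tx hashes."""
    first_seen = {}
    conflicts = set()
    for p in preconfs:
        key = (p.slot, p.index)
        if key in first_seen and first_seen[key] != p.tx_hash:
            conflicts.add(key)
        first_seen.setdefault(key, p.tx_hash)
    return sorted(conflicts)


if __name__ == "__main__":
    msgs = [
        Preconf(100, 1, "0xaa"),
        Preconf(100, 2, "0xbb"),
        Preconf(100, 1, "0xaa"),  # repeat of an earlier claim: not a conflict
        Preconf(100, 2, "0xcc"),  # contradicts the second claim
    ]
    print(find_equivocations(msgs))
```

Option (ii) would instead keep both messages and let attesters decide which one they saw first, avoiding slashing for honest network-delay cases at the cost of an extra vote.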

What are some links to existing research?

Based preconfirmations: https://ethresear.ch/t/based-preconfirmations/17353

Protocol Enforced Proposer Commitments (PEPC): https://ethresear.ch/t/unbundling-pbs-towards-protocol-enforced-proposer-commitments-pepc/13879

Staggered Periods on Parallel Chains (2018 idea for low latency): https://ethresear.ch/t/staggered-periods/1793

What’s left to do, and what are the tradeoffs?

It's not clear how practical it would be to reduce slot times. Even today, stakers in many parts of the world struggle to get their attestations in fast enough. Attempting 4-second slot times risks centralizing the validator set, and latency would make it impractical to be a validator outside a few privileged regions.

The weakness of the proposer preconfirmation approach is that it greatly improves average-case inclusion time, but not worst-case inclusion time: if the current proposer is functioning well, your transaction will be preconfirmed in 0.5 seconds instead of being included in (on average) 6 seconds, but if the current proposer is offline or functioning poorly, you will still have to wait a full 12 seconds for the next slot to start and a new proposer to come in.
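The gap between average-case and worst-case inclusion time can be reproduced with a simplified Python model. It assumes transactions arrive uniformly within a 12-second slot, that a functioning proposer preconfirms after 0.5 seconds, that without preconfirmations a transaction is included at the next slot boundary, and that when the proposer is offline the next slot's proposer preconfirms shortly after its slot starts. These timings are illustrative assumptions.

```python
def inclusion_delay(arrival, slot=12.0, preconf_latency=0.5,
                    preconfs=True, proposer_online=True):
    """Seconds from submission until the tx is (pre)confirmed (simplified)."""
    to_next_slot = slot - arrival
    if preconfs and proposer_online:
        return preconf_latency
    if preconfs:
        # Offline proposer: wait for the next slot's proposer to preconfirm.
        return to_next_slot + preconf_latency
    # No preconfirmations: included in the block at the next slot boundary.
    return to_next_slot


def stats(samples=1200, **kwargs):
    """(mean, worst) delay over arrival times spread evenly across one slot."""
    slot = kwargs.get("slot", 12.0)
    delays = [inclusion_delay(i * slot / samples, **kwargs)
              for i in range(samples)]
    return sum(delays) / len(delays), max(delays)


if __name__ == "__main__":
    cases = {
        "no preconfirmations": dict(preconfs=False),
        "preconfs, proposer online": dict(preconfs=True, proposer_online=True),
        "preconfs, proposer offline": dict(preconfs=True, proposer_online=False),
    }
    for label, kw in cases.items():
        mean, worst = stats(**kw)
        print(f"{label:<28} mean {mean:5.2f} s, worst {worst:5.2f} s")
```

Under these assumptions the average delay falls from about 6 seconds to 0.5 seconds, but the worst case, an offline proposer, stays around 12.5 seconds.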

In addition, there is an open question of how to incentivize preconfirmations. Proposers have an incentive to keep their options open for as long as possible. If attesters sign off on the timeliness of preconfirmations, then transaction senders can make part of their fees conditional on immediate preconfirmation, but this places an additional burden on attesters and may make it harder for them to keep acting as neutral "dumb pipes".

On the other hand, if we don’t try to do this and keep finalization times at 12 seconds (or longer), the ecosystem will place more emphasis on pre-confirmation mechanisms enacted by Layer 2, and interactions across Layer 2 will take longer.

How does it interact with the rest of the roadmap?

Proposer-based preconfirmations realistically depend on an attester-proposer separation (APS) mechanism, such as execution tickets. Otherwise, the pressure to provide real-time preconfirmations may create too much centralization pressure on regular validators.

Other Areas of Research

51% Attack Recovery

It is often assumed that in the event of a 51% attack (including attacks that cannot be cryptographically proven, such as censorship), the community will come together to implement a minority soft fork, ensuring that the good guys win and the bad guys are inactivity-leaked or slashed. However, this degree of reliance on the social layer is arguably unhealthy. We can try to reduce the reliance on the social layer by making the recovery process as automated as possible.

Full automation is impossible, because if it were possible, it would amount to a >50% fault-tolerant consensus algorithm, and we already know the (very restrictive) mathematically provable limits of such algorithms. But we can achieve partial automation: for example, a client could automatically refuse to accept a chain as finalized, or even as the head of the fork choice, if it censors transactions that the client has seen for long enough. A key goal is to ensure that the bad guys in an attack at least cannot win quickly.
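A minimal Python sketch of the partial-automation rule above. Everything here is hypothetical: the `ChainView` type, the `max_delay` parameter, and the rule itself are illustrative assumptions, not an actual client API. It also ignores whether a censored transaction was valid and paid enough to be includable, which a real rule would need to check.

```python
from dataclasses import dataclass, field


@dataclass
class ChainView:
    """Hypothetical summary of one candidate chain."""
    head: str
    included: set = field(default_factory=set)  # tx hashes included on this chain


def acceptable_heads(candidates, first_seen, now, max_delay):
    """Drop candidate chains that omit any tx this client saw over max_delay ago.

    first_seen maps tx hash -> time (seconds) when this client first saw it.
    """
    overdue = {tx for tx, t in first_seen.items() if now - t > max_delay}
    return [c.head for c in candidates if overdue <= c.included]


if __name__ == "__main__":
    chains = [ChainView("A", {"tx1", "tx2"}), ChainView("B", {"tx2"})]
    seen = {"tx1": 0, "tx2": 90}
    print(acceptable_heads(chains, seen, now=100, max_delay=60))
```

Because each client applies the rule to its own view of the mempool, this only makes censorship harder to sustain quietly; it does not decide the outcome of an attack on its own.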

Increase the quorum threshold

Today, a block is finalized if 67% of stakers support it. Some argue this is too aggressive. In the entire history of Ethereum, there has only been one (very brief) finality failure. If this percentage were raised to 80%, the number of additional non-finality periods would be relatively low, but Ethereum would gain safety: in particular, many more contentious situations would result in a temporary halt to finality. This seems much healthier than the "wrong side" winning immediately, whether the wrong side is an attacker or a client with a bug.

This also answers the question "what is the point of solo stakers?". Today, most stakers already stake through pools, and it seems unlikely that solo stakers will ever hold as much as 51% of staked ETH. However, it does seem possible for solo stakers to reach a blocking minority if we try, especially if the quorum threshold is 80% (so a blocking minority needs only 21%). As long as solo stakers do not participate in a 51% attack (whether finality reversion or censorship), such an attack would not get a "clean win", and solo stakers would be motivated to help organize a minority soft fork.
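The quorum arithmetic can be checked with exact fractions in Python. With a finality threshold q, any group holding more than 1 - q of the stake can block finality, and two conflicting blocks can only both be finalized if at least 2q - 1 of the stake voted for both (and is therefore slashable). This is the standard quorum-intersection argument; the function names are illustrative.

```python
from fractions import Fraction


def blocking_minority(quorum):
    """Stake share that must be exceeded to prevent finality."""
    return 1 - quorum


def min_slashable_overlap(quorum):
    """Minimum stake share that voted for both of two conflicting finalized blocks."""
    return 2 * quorum - 1


if __name__ == "__main__":
    for q in (Fraction(2, 3), Fraction(4, 5)):
        print(f"quorum {q}: blocking minority > {blocking_minority(q)}, "
              f"slashable overlap >= {min_slashable_overlap(q)}")
```

Raising the threshold from 2/3 to 4/5 lowers the blocking minority from 1/3 to 1/5 of stake, while raising the guaranteed slashable overlap from 1/3 to 3/5.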

Quantum Resistance

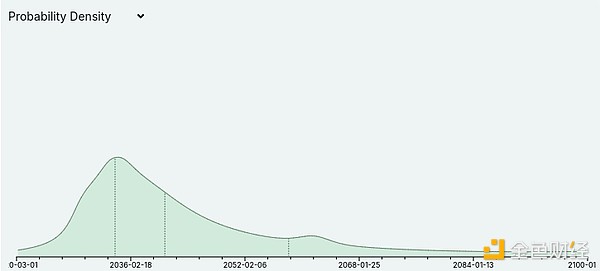

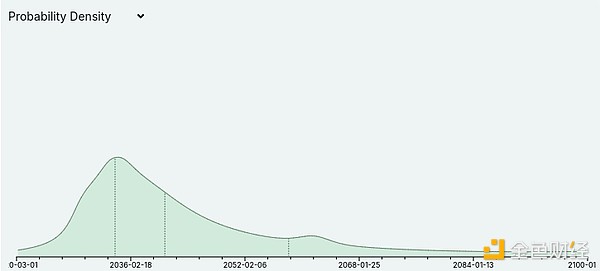

Metaculus currently estimates that, albeit with a large margin of error, quantum computers are likely to begin breaking cryptography sometime in the 2030s.

Quantum computing experts, such as Scott Aaronson, have also recently begun to take the possibility of quantum computers actually working in the medium term more seriously. This has implications for the entire Ethereum roadmap: it means that every part of the Ethereum protocol that currently relies on elliptic curves will need some kind of hash-based or other quantum-resistant alternative. This specifically means that we cannot assume that we will be able to rely forever on the superior properties of BLS aggregation to process signatures from large validator sets. This justifies conservatism in performance assumptions for proof-of-stake designs, and is a reason to more aggressively develop quantum-resistant alternatives.
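As an illustration of what a hash-based alternative can look like, here is a textbook Lamport one-time signature in Python. It is not the scheme Ethereum plans to adopt; it is shown only because it is the simplest hash-based construction. It also makes the cost visible: a signature is 256 × 32 = 8192 bytes, versus 96 bytes for a BLS signature, and there is no BLS-style algebraic way to merge many of them into one.

```python
import hashlib
import secrets


def keygen():
    """256 pairs of random 32-byte secrets; the public key is their SHA-256 hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(hashlib.sha256(a).digest(), hashlib.sha256(b).digest()) for a, b in sk]
    return sk, pk


def message_bits(msg):
    """The 256 bits of SHA-256(msg), most significant bit first."""
    h = int.from_bytes(hashlib.sha256(msg).digest(), "big")
    return [(h >> (255 - i)) & 1 for i in range(256)]


def sign(sk, msg):
    """Reveal one secret per message-hash bit. Each key must sign only once."""
    return [sk[i][bit] for i, bit in enumerate(message_bits(msg))]


def verify(pk, msg, sig):
    """Check that each revealed secret hashes to the matching public-key entry."""
    if len(sig) != 256:
        return False
    bits = message_bits(msg)
    return all(hashlib.sha256(s).digest() == pk[i][b]
               for i, (s, b) in enumerate(zip(sig, bits)))


if __name__ == "__main__":
    sk, pk = keygen()
    sig = sign(sk, b"attestation")
    print(verify(pk, b"attestation", sig), sum(len(s) for s in sig))
```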

Catherine
